The science of how AI picks its sources

I analyzed over 21K citations to understand the impact of content length, depth and focus.


In The science of how AI pays attention, I analyzed 1.2 million ChatGPT responses to understand exactly how AI reads a page. This is Part 2.

Where Part 1 told you where on a page AI looks, this one tells you which pages AI routinely considers.

The data clarifies:

Why ~30 domains own 67% of citations in any topic

The page structure that earns citations across 50+ distinct queries vs. the one that gets cited once

Whether the ski ramp from Part 1 is actually steeper or flatter in your vertical

Premium subscribers get a checklist to integrate the study results into their workflows.

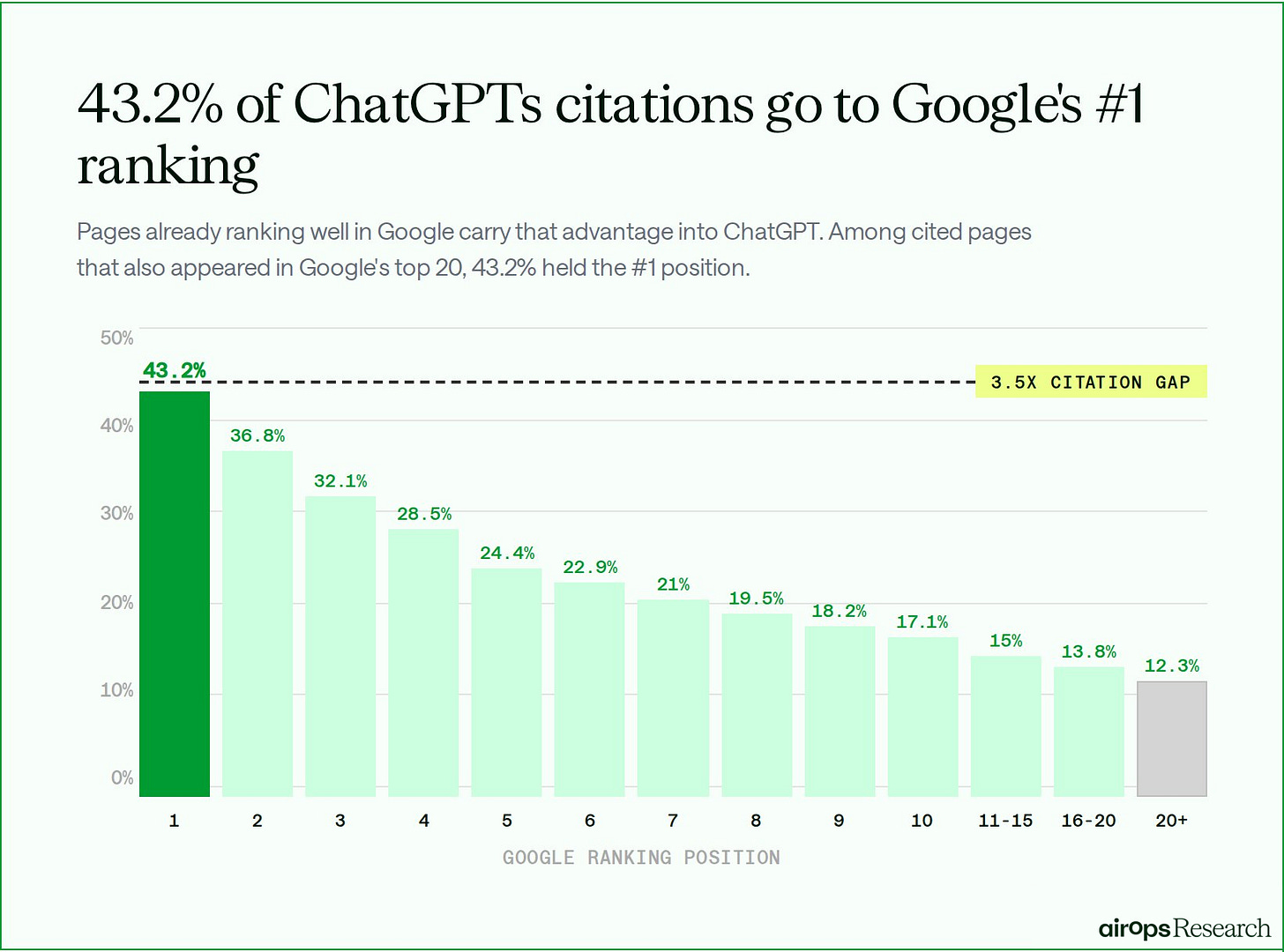


ChatGPT retrieves about 6x more pages than it cites.

In research across 548,534 retrieved pages and 15,000 prompts, AirOps found:

85% of pages ChatGPT retrieved were never cited.

⅓ of cited pages came from fan-out queries, and 95% of those had zero search volume.

Among pages ranking #1 in Google, 43.2% were cited by ChatGPT. That was 3.5x higher than the citation rate for pages ranking beyond Google’s top 20 results.

Ranking well helps, but it doesn’t guarantee citations.

AirOps surfaces these fan-out queries so teams can see the full search path ChatGPT uses to build an answer and act on it.

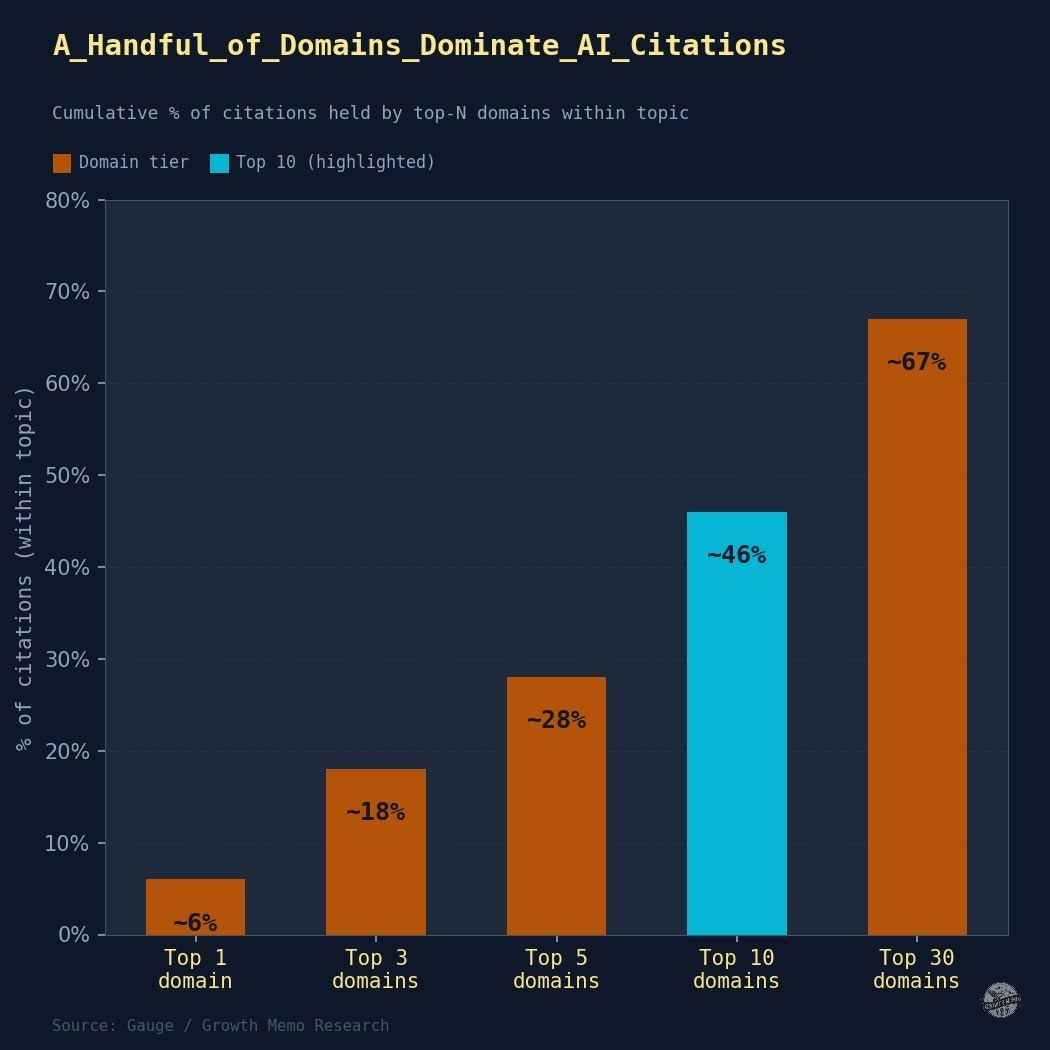

1/ ~30 domains own 67% of AI citations per topic.

Classic search is a winner-takes-all game. The top result gets disproportionately more clicks than the second. Is that also true for ChatGPT answers? Is the distribution of cited domains democratic or totalitarian?

Approach:

Compute the citation share per domain per vertical

Calculate the cumulative share captured by the top 10% of domains
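The two steps above can be sketched in a few lines of Python. This is a minimal illustration, not the exact pipeline used for the study: the input shape (one `(prompt, domain)` pair per citation row), the function names, and the sample domains are all assumptions made for the example.

```python
import math
from collections import Counter

def citation_shares(rows):
    """Return (domain, share) pairs sorted from most to least cited.

    rows: iterable of (prompt, domain) pairs, one per citation row (assumed shape).
    """
    counts = Counter(domain for _, domain in rows)
    total = sum(counts.values())
    return [(domain, n / total) for domain, n in counts.most_common()]

def top_share(shares, fraction=0.10):
    """Cumulative citation share held by the top `fraction` of domains."""
    k = max(1, math.ceil(len(shares) * fraction))  # always include at least one domain
    return sum(share for _, share in shares[:k])

# Hypothetical sample: 10 citation rows spread over 3 domains.
sample_rows = (
    [("p1", "a.example")] * 5
    + [("p2", "b.example")] * 3
    + [("p3", "c.example")] * 2
)
sample_shares = citation_shares(sample_rows)
sample_top = top_share(sample_shares, fraction=0.10)
```

Running the same two functions once per vertical would give the per-vertical concentration figures; the grouping step is left out to keep the sketch short.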

Dataset: 21,482 ChatGPT citation rows, 670 unique domains, 2,344 unique URLs, 127 unique prompts

Results: The top 10 domains take 46% of all citations in a topic. The top 30 take 67%.

AI citation is slightly less concentrated than traditional organic search, but still extreme:

Effectively, there are ~30 seats (domains) at the citation table for any given topic. Everything else is nearly invisible.

Example: storylane.io appears as a cited source across 102 distinct prompts (unique questions asked of ChatGPT), reprise.com across 98. Even though reprise.com has more total citations (1,369 vs. storylane.io’s 968), storylane.io shows up in answers to a broader range of different questions.
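To make the difference between citation count and citation reach concrete, here is a small sketch. The row format and the two sample domains are hypothetical; the point is only that reach counts distinct prompts, while the raw count includes repeat citations for the same prompt.

```python
from collections import defaultdict

def reach_and_count(rows):
    """Per domain: distinct prompts answered (reach) and total citation rows.

    rows: iterable of (prompt, domain) pairs, one per citation row (assumed shape).
    """
    prompts = defaultdict(set)
    citations = defaultdict(int)
    for prompt, domain in rows:
        prompts[domain].add(prompt)   # sets drop repeat prompts automatically
        citations[domain] += 1
    return {d: {"reach": len(prompts[d]), "citations": citations[d]} for d in citations}

# Hypothetical sample: beta has more citations, alpha has broader reach.
sample = [
    ("p1", "alpha.example"), ("p2", "alpha.example"), ("p3", "alpha.example"),
    ("p1", "beta.example"), ("p1", "beta.example"), ("p1", "beta.example"),
    ("p2", "beta.example"), ("p2", "beta.example"),
]
stats = reach_and_count(sample)
```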

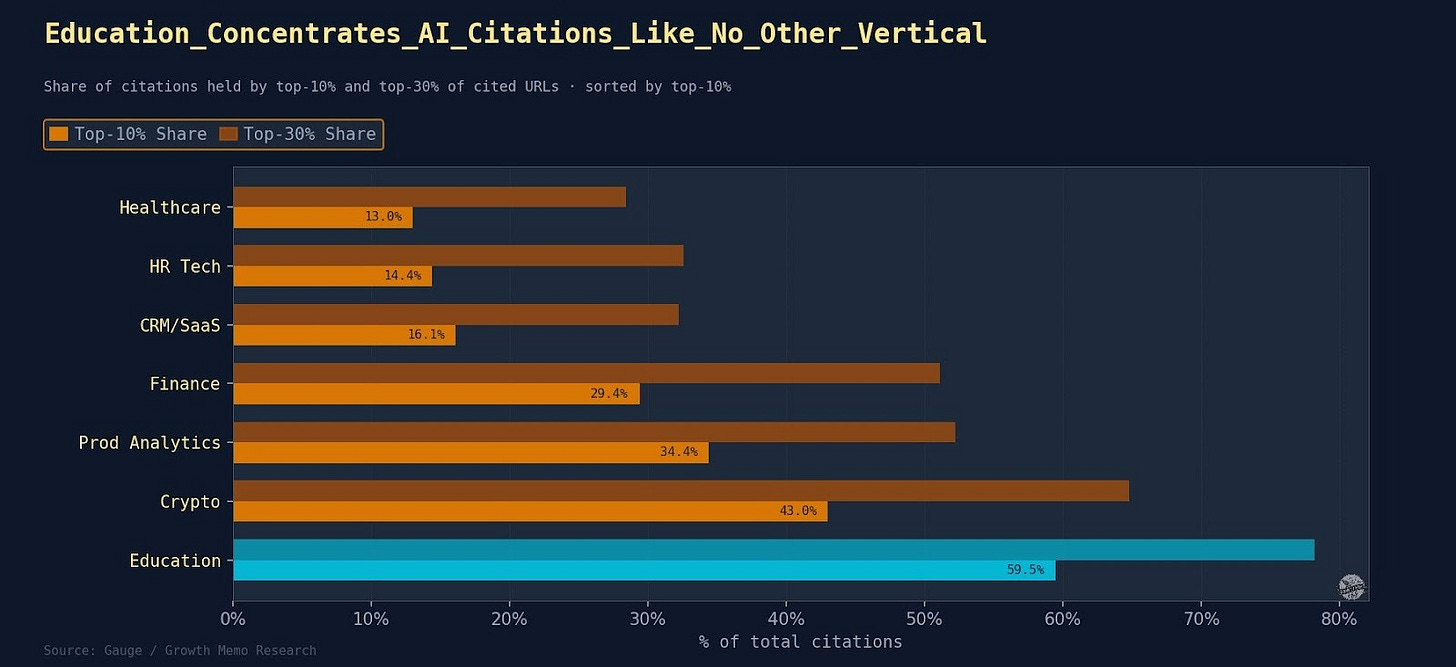

We confirmed these findings in product-comparison verticals (SaaS tools, financial advisors). However, you’ll see below that the pattern is weaker in healthcare and open web topics, where no single domain dominates. Education shows the strongest concentration of any vertical we studied.

What the industry patterns showed:

The findings above come from product-comparison verticals (SaaS, financial advisors). The pattern is weaker in healthcare and open web topics, where no single domain dominates, and stronger in the education sector.

Education is winner-take-most: the top 10% of domains capture 59.5% of all citations.

If you are not already in the top 5-10 domains in education, achieving citation breadth is exceptionally hard.

tefl.org alone answers 102 unique prompts and holds 18.75% of all Education citations. The next three domains are internationalteflacademy.com (7.83%), gooverseas.com (5.87%), and reddit.com (5.22%), so the top 3 domains together control about 32% of all citations.

Crypto is the second most concentrated at 43.0% for the top 10%.

A small set of technical documentation and comparison sites (alchemy.com, quicknode.com, chainstack.com) dominate Solana RPC and infrastructure queries.

The technical nature of Solana queries means few credible sources exist; once a domain earns trust in this niche, it captures a large share.

Finance sits at 29.4% for top-10%.

Concentration is query-type specific: Financial advisor locator pages (forfiduciary.com at 139 unique prompts, smartasset.com at 168 unique prompts) dominate city-level advisor queries.

But the long tail of financial product queries keeps total concentration moderate.

Healthcare is the least concentrated at 13.0% for the top 10%.

No single domain dominates. New entrants have a realistic path to citation reach.

The citation surface is spread across hundreds of domains, each covering a small slice of telehealth, HIPAA compliance, and healthcare app queries.

CRM/SaaS and HR Tech are similarly diffuse (16.1% and 14.4% top-10%).

These are multi-product software categories where dozens of comparison sites, review platforms, and vendor pages split citations.

monday.com leads CRM with only 2.88% of all citations (37 unique prompts). This is a genuinely open competitive field.

Top takeaways:

1/ Breadth of topic coverage matters more than domain authority. A single well-structured comparison page (learn.g2.com: 65 unique prompts, 495 citations) can still outperform the entire domain portfolio of a well-known brand. The goal is not to rank for one query, but to answer a cluster.

2/ Concentration reflects category maturity. Fragmentation is an opportunity. Education and Crypto have narrow, well-defined query spaces where a few authoritative sources have locked in trust. Healthcare and CRM are broad, fragmented categories where no single domain dominates. That fragmentation is your opening.

3/ Citation reach (the number of distinct prompts a domain answers) is a more useful strategic metric than raw citation count. In low-concentration verticals like Healthcare and CRM, a focused 30-50 page strategy can realistically compete for a seat at the table. In high-concentration verticals like Education and Crypto, the path is narrower: become the definitive resource on a specific sub-topic or accept that you’re fighting for scraps.

2/ The citation advantage starts at 10,000 characters.

In classic Search, word count and page length are somewhat indicative of rank, as long as the quality is high. I wondered whether that is also true for showing up in ChatGPT answers.

Approach:

Measure raw text length of every cited page

Group length into 7 buckets

For each bucket, calculate average citations per page
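The bucketing approach above could look roughly like this. The bucket edges are an assumption pieced together from the tiers named in this memo (the 500-1K tier is inferred to reach 7 buckets), and the sample pages are made up for illustration.

```python
from bisect import bisect_right
from collections import defaultdict

# Assumed bucket edges; the lower bound of each bucket is inclusive.
EDGES = [500, 1_000, 2_000, 5_000, 10_000, 20_000]
LABELS = ["<500", "500-1K", "1K-2K", "2K-5K", "5K-10K", "10K-20K", "20K+"]

def bucket(length):
    """Map a raw text length to one of the 7 length buckets."""
    return LABELS[bisect_right(EDGES, length)]

def avg_citations_by_bucket(pages):
    """pages: iterable of (text_length, citation_count) pairs (assumed shape)."""
    totals = defaultdict(lambda: [0, 0])  # label -> [citation sum, page count]
    for length, citations in pages:
        entry = totals[bucket(length)]
        entry[0] += citations
        entry[1] += 1
    # Only report buckets that actually contain pages.
    return {label: totals[label][0] / totals[label][1]
            for label in LABELS if label in totals}

# Hypothetical sample pages.
sample_pages = [(300, 2), (450, 4), (7_000, 10), (25_000, 12)]
averages = avg_citations_by_bucket(sample_pages)
```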

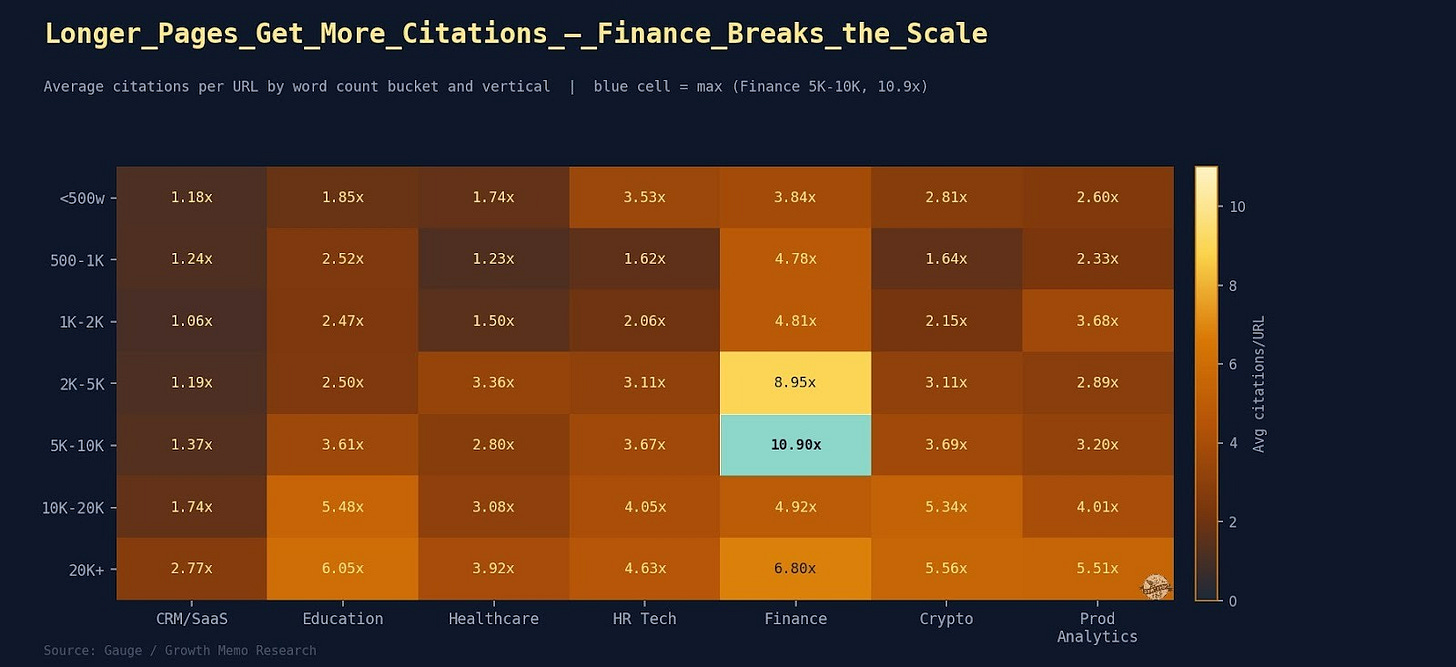

Results: More words do indeed correlate with more citations, but there’s a ceiling.

The 5K-to-10K jump is the largest single step - nearly 2x. Pages above 20,000 characters average 10.18 citations each vs. 2.39 for pages under 500 characters.

What the industry patterns showed:

The length effect is vertical-specific: Finance inverts it entirely. High-cited Finance pages average 1,783 words vs. 2,084 for low-cited pages - a 0.86x lift. Authoritative compact sources, rate tables, and regulatory summaries outperform comprehensive guides there. The 10,000-character rule holds for SaaS and editorial content.

Finance peaks at 5K-10K words (10.9 citations/page), then drops sharply at 10K-20K (4.92 citations/page).

Finance also shows the steepest absolute gain: Pages under 500 words earn only 3.84 citations/page while 5K-10K pages earn 10.9, which is a 2.8x multiplier from length optimization alone.

Very long Finance pages may dilute the citation-triggering content with redundant detail.

Education shows the clearest length-wins-everything pattern.

Citations per page climb steadily from 1.85 (under 500 words) to 6.05 (20K+ words) with no drop-off.

Crypto and Product Analytics behave similarly to Education.

Length consistently pays off, plateauing around the 10K-20K tier (5.34 and 4.01, respectively). Both are technical verticals where comprehensiveness signals authority.

SaaS shows the weakest length effect: Citations per page range from 1.06 (1K-2K words) to 2.77 (20K+ words).

Even the longest CRM pages only get 2.77 citations per page on average.

In this vertical, length alone does not determine citations. Format, structure, and domain authority appear more important.

Healthcare shows a moderate length effect (1.74 to 3.92 citations/page).

But with one anomaly: 5K-10K words (2.80) underperforms vs. 2K-5K words (3.36).

Very long Healthcare pages may include too much clinical detail that dilutes citation-triggering content.

Top takeaways:

1/ Universal finding: Very short pages (under 1K words) underperform in every vertical. The underperformance of thin content is consistent, but the reward for long content is vertical-specific.

2/ Target your length based on industry, content type, and query intent, not a universal word count. For Finance verticals: Aim for 5K-10K words. Education, Crypto, and Product Analytics: Go as long as possible. CRM/SaaS: Prioritize structure over word count.

3/ 58% of cited URLs are cited once.

When we look at the citations within a topic, we often see many pages on a domain getting cited. So, how many citations can a single page get?

Approach:

Count the number of unique prompts for each page

Classify number of citations into: 1, 2-5, 6-10, 11+

Inspect the top URLs per vertical for structural patterns
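The first two steps above can be sketched as follows. The tier boundaries come from the approach itself; the `(prompt, url)` row shape and the sample URLs are assumptions for illustration only.

```python
from collections import Counter, defaultdict

TIERS = ("1", "2-5", "6-10", "11+")

def reach_tier(unique_prompts):
    """Classify a URL by the number of distinct prompts that cite it."""
    if unique_prompts == 1:
        return "1"
    if unique_prompts <= 5:
        return "2-5"
    if unique_prompts <= 10:
        return "6-10"
    return "11+"

def tier_distribution(rows):
    """Share of URLs per tier. rows: iterable of (prompt, url) pairs (assumed shape)."""
    prompts_by_url = defaultdict(set)
    for prompt, url in rows:
        prompts_by_url[url].add(prompt)  # repeat citations for one prompt count once
    tiers = Counter(reach_tier(len(p)) for p in prompts_by_url.values())
    total = len(prompts_by_url)
    return {tier: tiers[tier] / total for tier in TIERS}

# Hypothetical sample: one broad guide, two one-hit pages, one mid-reach page.
sample_rows = (
    [(f"p{i}", "example.com/guide") for i in range(12)]
    + [("p0", "example.com/a"), ("p0", "example.com/a"), ("p1", "example.com/b")]
    + [(f"p{i}", "example.com/c") for i in range(3)]
)
distribution = tier_distribution(sample_rows)
```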

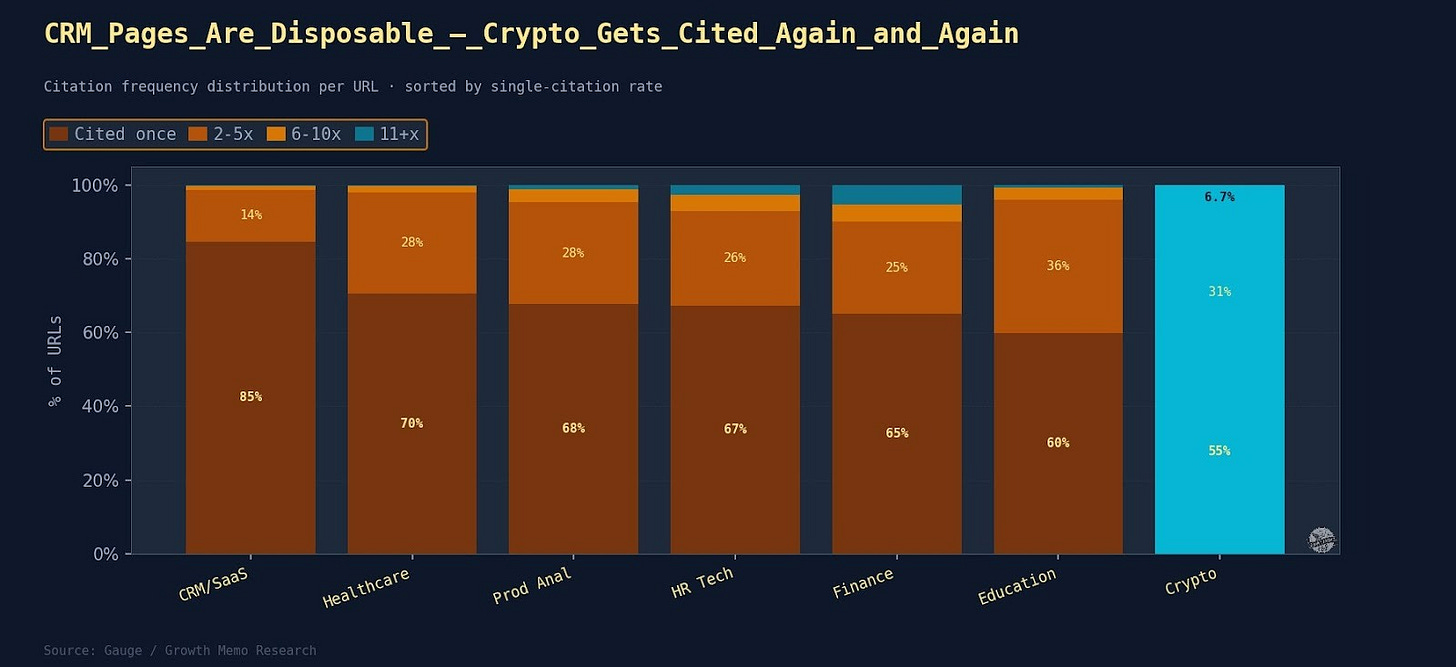

Results: On average, 67% of cited URLs appear in only one prompt.

Think of it like a footprint game. Raw citation count tells you how popular a page is. Citation breadth tells you how strategically valuable it is. An evergreen page in AI citation is not one that gets cited a lot; it is one that keeps appearing across diverse queries.

The top 4.8% of URLs (cited 10+) are all category-level comparisons or guides answering “what is it,” “who uses it,” “how to choose,” and “pricing” in a single URL.

What the industry patterns showed:

The citation pool isn’t a meritocracy of the best answer, but the degree varies sharply.

CRM/SaaS has the highest one-hit rate at 84.7%.

Finance produces the highest-reach evergreen pages: forfiduciary.com covers 119 unique prompts.

Crypto generates the most concentrated evergreen pages at 55.4% in the technical tier: chainstack.com/best-solana-rpc-providers-in-2026 (63 prompts), alchemy.com/overviews/solana-rpc (62 prompts), and rpcfast.com/blog/rpc-node-providers (61 prompts). All three are comparison pages covering the Solana RPC provider landscape from slightly different angles.

Education evergreen pages follow a different logic: tefl.org, internationalteflacademy.com, and gooverseas.com get cited broadly because they answer TEFL-adjacent queries (cost, location, certification type) from a single resource. One URL serves many query angles.

Top takeaways:

1/ Evergreen pages share consistent structural patterns: Category-level guide format (best X for 2025/2026), broad topic coverage within a single page (what is X, how to choose X, top X vendors, pricing), and explicit year anchoring in URL or title. Pages that answer a class of questions earn citation breadth.

2/ The top 5 evergreen pages in every vertical are either comparison roundups, authoritative guides, or directory/listing pages. No thin single-topic page reaches the 11+ prompt tier in any vertical.

3/ A single evergreen page covering 10+ query intents is worth more in AI citation reach than 10 single-intent pages. The ROI of comprehensive content is front-loaded: one well-built page compounds citation reach over time. The long tail exists, but the top 5% of pages capture a disproportionate share of ongoing citation activity.

4/ The ski ramp is steeper in some verticals.

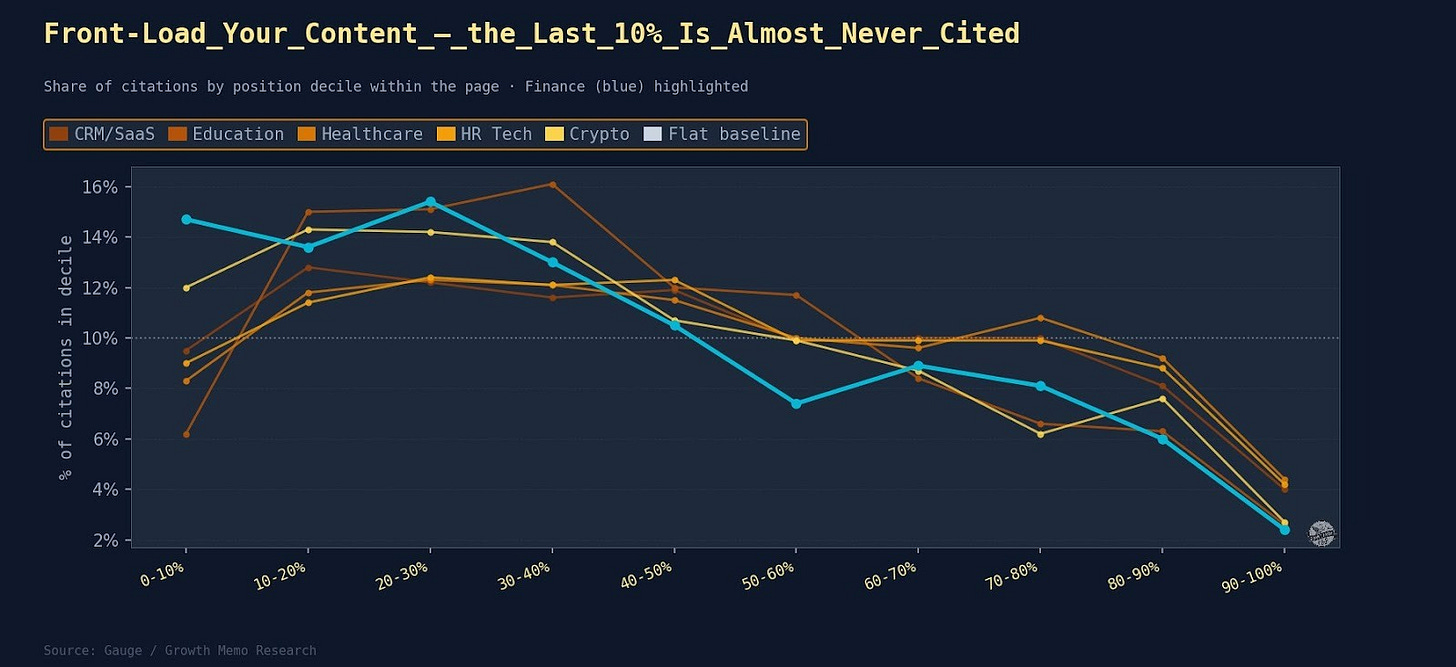

The science of how AI pays attention showed that ChatGPT draws 44.2% of its citations from the top 30% of any page. Does that trend hold across different verticals?

Approach: Re-run the same positional analysis across 7 verticals with 42,460 matched citations.
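Once each citation has been matched to a position on its page, the per-vertical comparison is a simple aggregation. The sketch below assumes each matched citation arrives as a `(vertical, decile)` pair with deciles numbered 0 (top of page) to 9 (bottom); that shape, the function names, and the sample values are illustrative, not the study's actual data.

```python
from collections import Counter, defaultdict

def decile_shares(matches):
    """Per vertical, the share of citations landing in each page decile.

    matches: iterable of (vertical, decile) pairs, decile in 0..9 (assumed shape).
    """
    by_vertical = defaultdict(Counter)
    for vertical, decile in matches:
        by_vertical[vertical][decile] += 1
    shares = {}
    for vertical, counts in by_vertical.items():
        total = sum(counts.values())
        shares[vertical] = [counts[d] / total for d in range(10)]
    return shares

def top_30_share(vertical_shares):
    """Share of citations drawn from the first three deciles of the page."""
    return sum(vertical_shares[:3])

# Hypothetical matched citations.
sample_matches = (
    [("Finance", d) for d in (0, 1, 1, 2, 5)]
    + [("Healthcare", d) for d in (1, 4, 6, 9)]
)
sample_decile_shares = decile_shares(sample_matches)
```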

Results: The trend is real but varies by topic. One number holds everywhere: The bottom 10% of any page earns 2.4-4.4% of citations, roughly a quarter of what the peak band earns. The conclusion section is nearly invisible to AI, regardless of vertical.

What the industry patterns showed:

The true peak decile is not the very opening. The 10-20% band is where AI reads hardest in most verticals. The first 10% is typically navigation, headlines, and intro fluff that AI skips.

Finance is the extreme case. 43.7% of citations land in the first 30% of the page. Finance pages front-load rate data, percentages, and key figures. AI grabs them and rarely reads past the halfway point.

Healthcare and HR Tech have the flattest ramps. Useful content is distributed more evenly across those pages.

Education peaks at the 30-40% decile rather than 10-20%, because educational content tends to bury the key answer slightly deeper after the intro.

Top takeaways:

1/ Put your most citable claims and data in the first 30% of the page - no matter what industry you’re in. Summaries and conclusions rarely get cited.

2/ For Finance brands: Front-load your thesis and statistics as much as possible.

What this means for how you build LLM visibility

The domains that own citation share didn’t get there by writing better sentences. They built pages that hold true topical authority, addressing multiple queries in one place, and then repeated that authority across enough sub-topics to hold multiple seats at the table.

Getting cited across 30, 60, or 100 distinct prompts requires a targeted content architecture: pages built around query clusters and owning entire topics rather than individual keywords. Teams that keep the traditional “one keyword, one page” model will be structurally locked out of AI citation, even if their individual pages are beautifully written.

But as the data shows, there is no universal playbook. The tactics that work for a broad CRM platform could actively harm a Finance brand.

Methodology:

We analyzed ~98,000 ChatGPT citation rows pulled from approximately 1.2 million ChatGPT responses from Gauge.


Because AI behaves differently depending on the topic, we isolated the data across 7 distinct, verified verticals to ensure the findings weren’t skewed by one specific industry.

Analyzed verticals:

B2B SaaS

Finance

Healthcare

Education

Crypto

HR Tech

Product Analytics

To reverse-engineer the citation selection, I ran the data through several layers of analysis:

Structural parsing: I measured the raw character length of every cited page and mapped heading hierarchies (H1s, H2s, H3s) to see how information architecture impacts visibility.

Positional mapping: I used Jaccard sliding-window similarity to pinpoint exactly where on the page the AI extracted its answers from, down to the specific decile.

Entity & Sentiment extraction: I ran the opening text of unique cited URLs through the Google Natural Language API to classify named entities (dates, prices, products) and used TextBlob to score sentiment, comparing the performance of corporate content against user-generated content (UGC).
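For readers who want to try the positional mapping step themselves, here is one way Jaccard sliding-window matching could work. It is a simplified sketch under stated assumptions, not the exact implementation used for this analysis: the tokenizer, the window size (set to the snippet length), and the choice of the window center as the position are all illustrative.

```python
import re

def tokens(text):
    """Lowercase word tokens; a deliberately simple tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())

def jaccard(a, b):
    """Jaccard similarity of two token collections: |A & B| / |A | B|."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def best_match_decile(page_text, snippet, step=1):
    """Slide a snippet-sized window over the page and return (decile, score).

    decile is 0..9, based on the center of the best-matching window.
    """
    page, snip = tokens(page_text), tokens(snippet)
    window = max(1, len(snip))
    if len(page) <= window:
        return 0, jaccard(page, snip)
    best_start, best_score = 0, -1.0
    for start in range(0, len(page) - window + 1, step):
        score = jaccard(page[start:start + window], snip)
        if score > best_score:  # keep the earliest window on ties
            best_start, best_score = start, score
    center = best_start + window / 2
    return min(9, int(10 * center / len(page))), best_score

# Hypothetical 100-token page; the snippet sits in the 10-20% band.
sample_page = " ".join(f"word{i}" for i in range(100))
sample_result = best_match_decile(sample_page, "word15 word16 word17 word18")
```

A larger `step` trades precision for speed on long pages, which matters when the matching runs across tens of thousands of citations.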

For premium subscribers: Your citation audit is below.

Most teams will see the 30-domain concentration figure, recognize they’re not in it, and move on without changing how they build content. Single-intent pages will keep shipping. Topic coverage gaps will stay unaddressed.

Premium subscribers get the tool to help change that: A citation-readiness checklist that scores your current content setup against the 4 signals from this analysis - domain footprint, page length by vertical, evergreen URL structure, and positional front-loading.