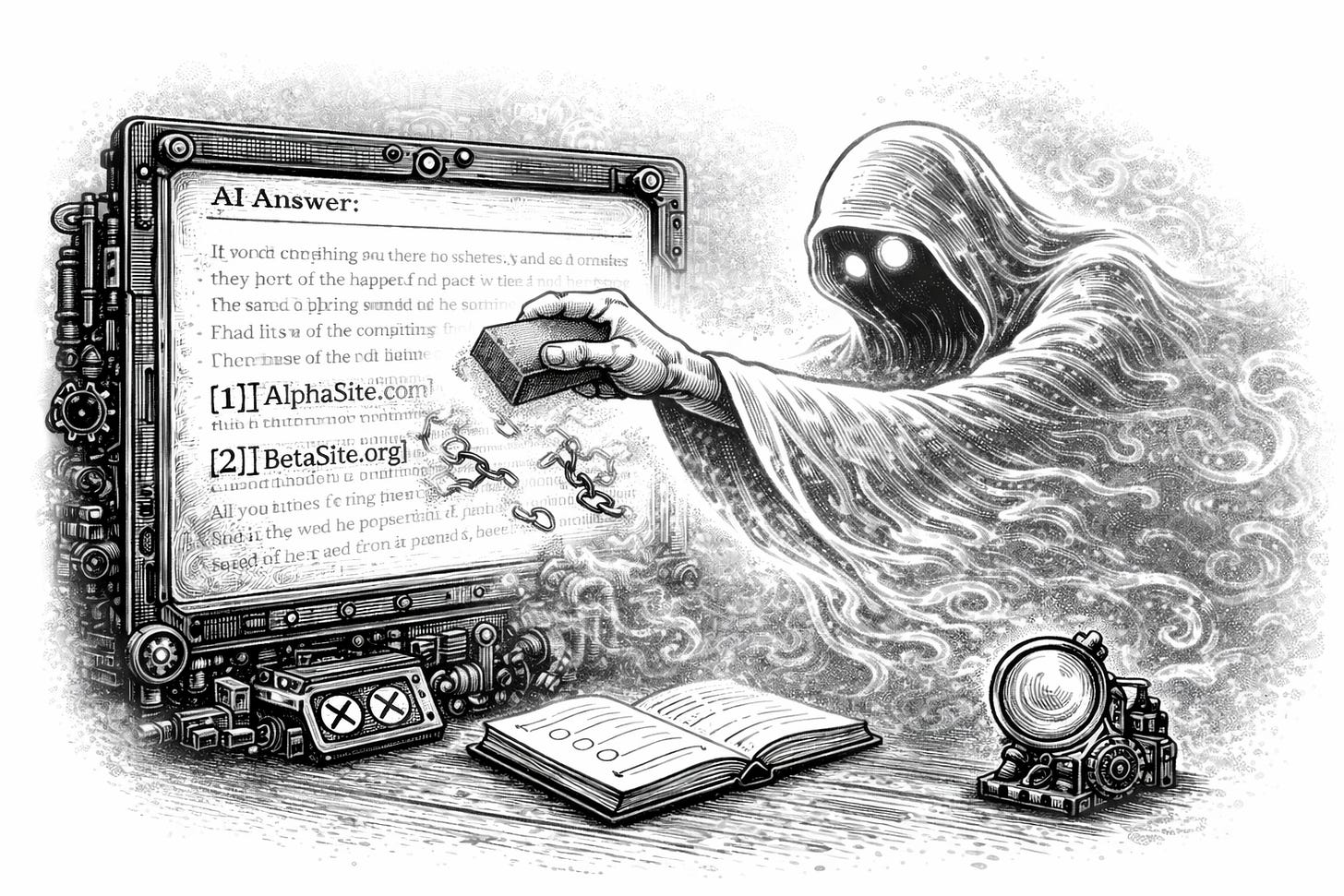

The ghost citation problem

New data from 3,981 domains across 115 prompts, 14 countries, and 4 AI search engines

When an AI …